Article Purpose

This article explores Edge Detection by means of Image Sharpening. It illustrates several methods of image sharpening and, in addition, a Median filter used for image noise reduction.

Sample Source Code

This article is accompanied by a sample source code Visual Studio project which is available for download here.

Using the Sample Application

The sample source code accompanying this article includes a Windows Forms based Sample Application. The concepts illustrated throughout this article can easily be tested and replicated by making use of the Sample Application.

The Sample Application exposes seven main areas of functionality:

- Loading input/source images.

- Saving image result.

- Sharpen Filters

- Median Filter Size

- Threshold value

- Grayscale Source

- Mono Output

When using the Sample application users are able to select input/source images from the local file system by clicking the Load Image button. If desired, users may save result images to the local file system by clicking the Save Image button.

The sample source code and sample application implement various methods of Image Sharpening. Each method results in a different degree of image edge detection, and some methods are more effective than others. The image sharpening method selected is the primary factor influencing edge detection results, and its effectiveness depends on the input/source image provided. The sample application implements the following image sharpening methods:

- Sharpen5To4

- Sharpen7To1

- Sharpen9To1

- Sharpen12To1

- Sharpen24To1

- Sharpen48To1

- Sharpen10To8

- Sharpen11To8

- Sharpen821

Image noise is a common problem in image edge detection, because noise is often incorrectly detected as forming part of an edge within an image. The sample source code implements a Median filter in order to counteract image noise. The size/intensity of the Median filter applied can be specified via the combobox labelled Median Filter Size.

The Threshold value configured through the sample application’s user interface serves two purposes. When output images are created in a black and white format, the Threshold value determines whether a pixel should be black or white. When output images are created as full colour images, the Threshold value is added to each pixel, acting as a bias value.
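As a rough illustration of these two roles, the sketch below applies a threshold to a single colour component value. The ThresholdSketch class and its method names are hypothetical and not part of the sample source code; the value passed in stands for an already calculated filter result.

```csharp
using System;

// Hypothetical helper, not part of the sample source code.
public static class ThresholdSketch
{
    // Mono output: the threshold decides between white (255) and black (0).
    public static byte ToMono(double value, int threshold)
    {
        return (byte)(value > threshold ? 255 : 0);
    }

    // Colour output: the threshold is added as a bias, then clamped to 0..255.
    public static byte WithBias(double value, int threshold)
    {
        double biased = value + threshold;
        return (byte)Math.Max(0, Math.Min(255, biased));
    }
}
```

With a threshold of 128, ToMono maps a value of 130 to white and a value of 128 to black, whereas WithBias simply shifts the value and clamps it to the valid byte range.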

In some scenarios Image Edge Detection can be achieved more effectively when source/input images are in a grayscale format. The checkbox labelled Grayscale Source converts source/input images to grayscale before image edge detection is performed.
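A conventional weighted grayscale conversion can be sketched as follows, assuming the factor values 0.3, 0.59 and 0.11 for red, green and blue listed later in this article and a pixel buffer in blue, green, red, alpha byte order. The GrayscaleSketch class is hypothetical: it sums the weighted components into a single luminance value and writes that value to all three colour channels, which is an assumption about a typical grayscale result rather than a copy of the sample source code.

```csharp
using System;

// Hypothetical helper, not part of the sample source code.
public static class GrayscaleSketch
{
    // Converts a 32 bits per pixel BGRA buffer to grayscale in place.
    public static void ToGrayscale(byte[] bgra)
    {
        for (int i = 0; i + 3 < bgra.Length; i += 4)
        {
            double luminance = bgra[i] * 0.11       // blue
                             + bgra[i + 1] * 0.59   // green
                             + bgra[i + 2] * 0.3;   // red

            byte gray = (byte)Math.Min(255.0, Math.Round(luminance));

            bgra[i] = gray;
            bgra[i + 1] = gray;
            bgra[i + 2] = gray;
            // The alpha component at bgra[i + 3] is left unchanged.
        }
    }
}
```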

The checkbox labelled Mono Output, when selected, has the effect of producing result images in a black and white format.

The image below is a screenshot of the Sharpen Edge Detection sample application in action:

Edge Detection through Image Sharpening

The sample source code performs edge detection on source/input images by means of image sharpening. The steps performed can be broken down to the following items:

- If specified, apply a Median filter to the input/source image. A Median filter smooths an image and thereby reduces image noise. Image smoothing/blurring often reduces image detail/definition, but the Median filter is well suited to smoothing away image noise whilst preserving edges. This makes it a good fit for reducing image noise ahead of edge detection without negatively impacting the edge detection itself.

- If specified, convert the source/input image to grayscale by iterating each pixel that forms part of the image. Each pixel’s colour components are multiplied by the following factor values: Red × 0.3, Green × 0.59, Blue × 0.11.

- Using the specified matrix kernel, iterate each pixel forming part of the source/input image, performing image convolution on each pixel colour channel.

- If the output image has been specified as Mono, multiply the value calculated for the middle pixel in convolution by the specified factor value and subtract the result from the pixel’s original colour component value. Compare the outcome to the specified threshold value and assign the pixel as either black or white.

- If the output image has not been specified as Mono, multiply the value calculated for the middle pixel in convolution by the factor value, subtract the result from the pixel’s original colour component value and add the threshold/bias value. In other words, perform image sharpening using convolution with a factor applied, subtract the sharpened result from the original source/input image and then add the bias.
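The convolution and subtraction steps can be sketched for a single colour channel as follows. The example works on a plain two-dimensional array instead of a Bitmap so that it stays self-contained. The EdgeSketch class, its Detect method and the parameter names are hypothetical, a square kernel is assumed, and the code only mirrors the description: convolve, multiply by the factor, subtract from the original value, then either apply the threshold (Mono) or add the bias and clamp.

```csharp
using System;

// Hypothetical single-channel sketch, not part of the sample source code.
public static class EdgeSketch
{
    public static byte[,] Detect(byte[,] source, double[,] kernel,
        double factor, int threshold, bool mono)
    {
        int height = source.GetLength(0);
        int width = source.GetLength(1);
        int offset = (kernel.GetLength(0) - 1) / 2; // assumes a square kernel
        byte[,] result = new byte[height, width];

        // Border pixels that the kernel cannot fully cover stay 0.
        for (int y = offset; y < height - offset; y++)
        {
            for (int x = offset; x < width - offset; x++)
            {
                double sum = 0;

                for (int ky = -offset; ky <= offset; ky++)
                {
                    for (int kx = -offset; kx <= offset; kx++)
                    {
                        sum += source[y + ky, x + kx]
                             * kernel[ky + offset, kx + offset];
                    }
                }

                // Subtract the sharpened value from the original value.
                double value = source[y, x] - factor * sum;

                if (mono)
                {
                    value = value > threshold ? 255 : 0;
                }
                else
                {
                    value = Math.Max(0, Math.Min(255, value + threshold));
                }

                result[y, x] = (byte)value;
            }
        }

        return result;
    }
}
```

In a flat region the sharpened value equals the original value when the kernel elements sum to 1, so the difference is 0; only pixels that differ from their neighbours produce a non-zero result.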

Implementing Sharpen Edge Detection

The sample source code achieves edge detection through image sharpening by implementing three methods: MedianFilter and two overloaded methods titled SharpenEdgeDetect.

The MedianFilter method is defined as an extension method targeting the Bitmap class. The definition is as follows:

    // Requires the namespaces System, System.Collections.Generic, System.Drawing,
    // System.Drawing.Imaging and System.Runtime.InteropServices.
    public static Bitmap MedianFilter(this Bitmap sourceBitmap, int matrixSize)
    {
        BitmapData sourceData = sourceBitmap.LockBits(
            new Rectangle(0, 0, sourceBitmap.Width, sourceBitmap.Height),
            ImageLockMode.ReadOnly, PixelFormat.Format32bppArgb);

        byte[] pixelBuffer = new byte[sourceData.Stride * sourceData.Height];
        byte[] resultBuffer = new byte[sourceData.Stride * sourceData.Height];

        Marshal.Copy(sourceData.Scan0, pixelBuffer, 0, pixelBuffer.Length);
        sourceBitmap.UnlockBits(sourceData);

        int filterOffset = (matrixSize - 1) / 2;
        int calcOffset = 0;
        int byteOffset = 0;

        List<int> neighbourPixels = new List<int>();
        byte[] middlePixel;

        // Pixels closer than filterOffset to the image border are skipped.
        for (int offsetY = filterOffset; offsetY < sourceBitmap.Height - filterOffset; offsetY++)
        {
            for (int offsetX = filterOffset; offsetX < sourceBitmap.Width - filterOffset; offsetX++)
            {
                byteOffset = offsetY * sourceData.Stride + offsetX * 4;

                neighbourPixels.Clear();

                // Collect every pixel in the neighbourhood as a packed 32-bit value.
                for (int filterY = -filterOffset; filterY <= filterOffset; filterY++)
                {
                    for (int filterX = -filterOffset; filterX <= filterOffset; filterX++)
                    {
                        calcOffset = byteOffset + (filterX * 4) + (filterY * sourceData.Stride);

                        neighbourPixels.Add(BitConverter.ToInt32(pixelBuffer, calcOffset));
                    }
                }

                neighbourPixels.Sort();

                // The median is the middle element of the sorted neighbourhood.
                middlePixel = BitConverter.GetBytes(neighbourPixels[neighbourPixels.Count / 2]);

                resultBuffer[byteOffset] = middlePixel[0];
                resultBuffer[byteOffset + 1] = middlePixel[1];
                resultBuffer[byteOffset + 2] = middlePixel[2];
                resultBuffer[byteOffset + 3] = middlePixel[3];
            }
        }

        Bitmap resultBitmap = new Bitmap(sourceBitmap.Width, sourceBitmap.Height);

        BitmapData resultData = resultBitmap.LockBits(
            new Rectangle(0, 0, resultBitmap.Width, resultBitmap.Height),
            ImageLockMode.WriteOnly, PixelFormat.Format32bppArgb);

        Marshal.Copy(resultBuffer, 0, resultData.Scan0, resultBuffer.Length);
        resultBitmap.UnlockBits(resultData);

        return resultBitmap;
    }
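To illustrate the median selection step in isolation, the sketch below calculates the median of a square neighbourhood in a small grid of integer values. MedianSketch and MedianAt are hypothetical names, and plain integers stand in for the packed 32-bit pixel values that the method above sorts. The caller is assumed to pass a position whose neighbourhood lies entirely inside the grid.

```csharp
using System.Collections.Generic;

// Hypothetical helper, not part of the sample source code.
public static class MedianSketch
{
    public static int MedianAt(int[,] values, int row, int column, int matrixSize)
    {
        int offset = (matrixSize - 1) / 2;
        List<int> neighbours = new List<int>();

        for (int y = -offset; y <= offset; y++)
        {
            for (int x = -offset; x <= offset; x++)
            {
                neighbours.Add(values[row + y, column + x]);
            }
        }

        neighbours.Sort();

        // For an odd number of elements the median sits at index Count / 2.
        return neighbours[neighbours.Count / 2];
    }
}
```

A single outlier, such as one value of 255 surrounded by values of 10, does not survive the filter because it ends up at the far end of the sorted list rather than in the middle.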

The public implementation of the SharpenEdgeDetect extension method translates user specified options into the relevant calls to the private implementation of the SharpenEdgeDetect extension method. The public implementation is as follows:

    public static Bitmap SharpenEdgeDetect(this Bitmap sourceBitmap,
        SharpenType sharpen, int bias = 0, bool grayscale = false,
        bool mono = false, int medianFilterSize = 0)
    {
        Bitmap resultBitmap = null;

        // A median filter size of 0 means no noise reduction is applied.
        if (medianFilterSize == 0)
        {
            resultBitmap = sourceBitmap;
        }
        else
        {
            resultBitmap = sourceBitmap.MedianFilter(medianFilterSize);
        }

        switch (sharpen)
        {
            case SharpenType.Sharpen7To1:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen7To1, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen9To1:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen9To1, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen12To1:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen12To1, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen24To1:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen24To1, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen48To1:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen48To1, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen5To4:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen5To4, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen10To8:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen10To8, 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen11To8:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen11To8, 3.0 / 1.0, bias, grayscale, mono);
                break;
            case SharpenType.Sharpen821:
                resultBitmap = resultBitmap.SharpenEdgeDetect(
                    Matrix.Sharpen821, 8.0 / 1.0, bias, grayscale, mono);
                break;
        }

        return resultBitmap;
    }

The Matrix class provides the definition of static pre-defined matrix kernel values. The definition is as follows:

    public static class Matrix
    {
        public static double[,] Sharpen7To1
        {
            get
            {
                return new double[,]
                {
                    {  1,  1,  1, },
                    {  1, -7,  1, },
                    {  1,  1,  1, },
                };
            }
        }

        public static double[,] Sharpen9To1
        {
            get
            {
                return new double[,]
                {
                    { -1, -1, -1, },
                    { -1,  9, -1, },
                    { -1, -1, -1, },
                };
            }
        }

        public static double[,] Sharpen12To1
        {
            get
            {
                return new double[,]
                {
                    { -1, -1, -1, },
                    { -1, 12, -1, },
                    { -1, -1, -1, },
                };
            }
        }

        public static double[,] Sharpen24To1
        {
            get
            {
                return new double[,]
                {
                    { -1, -1, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, },
                    { -1, -1, 24, -1, -1, },
                    { -1, -1, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, },
                };
            }
        }

        public static double[,] Sharpen48To1
        {
            get
            {
                return new double[,]
                {
                    { -1, -1, -1, -1, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, -1, -1, },
                    { -1, -1, -1, 48, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, -1, -1, },
                    { -1, -1, -1, -1, -1, -1, -1, },
                };
            }
        }

        public static double[,] Sharpen5To4
        {
            get
            {
                return new double[,]
                {
                    {  0, -1,  0, },
                    { -1,  5, -1, },
                    {  0, -1,  0, },
                };
            }
        }

        public static double[,] Sharpen10To8
        {
            get
            {
                return new double[,]
                {
                    {  0, -2,  0, },
                    { -2, 10, -2, },
                    {  0, -2,  0, },
                };
            }
        }

        public static double[,] Sharpen11To8
        {
            get
            {
                return new double[,]
                {
                    {  0, -2,  0, },
                    { -2, 11, -2, },
                    {  0, -2,  0, },
                };
            }
        }

        public static double[,] Sharpen821
        {
            get
            {
                // The outer ring is -1 throughout, keeping the kernel symmetric.
                return new double[,]
                {
                    { -1, -1, -1, -1, -1, },
                    { -1,  2,  2,  2, -1, },
                    { -1,  2,  8,  2, -1, },
                    { -1,  2,  2,  2, -1, },
                    { -1, -1, -1, -1, -1, },
                };
            }
        }
    }
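One way to see why subtracting a sharpened image from the original highlights edges is to combine both steps into a single kernel: the identity kernel (1 at the centre, 0 elsewhere) minus the sharpen kernel. Because convolution is linear, this combined kernel describes the same operation when the factor is 1.0 and no bias or clamping is applied. The sketch below uses the hypothetical helper names KernelSketch, Sum and IdentityMinus, which are not part of the sample source code.

```csharp
// Hypothetical helper, not part of the sample source code.
public static class KernelSketch
{
    // Adds up all elements of a kernel.
    public static double Sum(double[,] kernel)
    {
        double total = 0;

        foreach (double value in kernel)
        {
            total += value;
        }

        return total;
    }

    // Returns the identity kernel minus the given kernel.
    public static double[,] IdentityMinus(double[,] kernel)
    {
        int rows = kernel.GetLength(0);
        int columns = kernel.GetLength(1);
        double[,] result = new double[rows, columns];

        for (int y = 0; y < rows; y++)
        {
            for (int x = 0; x < columns; x++)
            {
                bool isCentre = (y == rows / 2 && x == columns / 2);
                result[y, x] = (isCentre ? 1 : 0) - kernel[y, x];
            }
        }

        return result;
    }
}
```

For Sharpen5To4 the elements sum to 1 and the combined kernel has -4 at the centre, 1 at the four direct neighbours and a sum of 0, so flat image regions produce 0 and only changes in intensity remain.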

The private implementation of the SharpenEdgeDetect extension method performs image sharpening through convolution and then performs image subtraction. The definition is as follows:

    private static Bitmap SharpenEdgeDetect(this Bitmap sourceBitmap,
        double[,] filterMatrix, double factor = 1, int bias = 0,
        bool grayscale = false, bool mono = false)
    {
        BitmapData sourceData = sourceBitmap.LockBits(
            new Rectangle(0, 0, sourceBitmap.Width, sourceBitmap.Height),
            ImageLockMode.ReadOnly, PixelFormat.Format32bppArgb);

        byte[] pixelBuffer = new byte[sourceData.Stride * sourceData.Height];
        byte[] resultBuffer = new byte[sourceData.Stride * sourceData.Height];

        Marshal.Copy(sourceData.Scan0, pixelBuffer, 0, pixelBuffer.Length);
        sourceBitmap.UnlockBits(sourceData);

        if (grayscale)
        {
            // Bytes are ordered blue, green, red, alpha. Each channel is scaled
            // by its own factor; the channels are not summed into one value.
            for (int pixel = 0; pixel < pixelBuffer.Length; pixel += 4)
            {
                pixelBuffer[pixel] = (byte)(pixelBuffer[pixel] * 0.11f);
                pixelBuffer[pixel + 1] = (byte)(pixelBuffer[pixel + 1] * 0.59f);
                pixelBuffer[pixel + 2] = (byte)(pixelBuffer[pixel + 2] * 0.3f);
            }
        }

        double blue = 0.0;
        double green = 0.0;
        double red = 0.0;

        int filterWidth = filterMatrix.GetLength(1);
        int filterOffset = (filterWidth - 1) / 2;
        int calcOffset = 0;
        int byteOffset = 0;

        // Pixels closer than filterOffset to the image border are skipped.
        for (int offsetY = filterOffset; offsetY < sourceBitmap.Height - filterOffset; offsetY++)
        {
            for (int offsetX = filterOffset; offsetX < sourceBitmap.Width - filterOffset; offsetX++)
            {
                blue = 0;
                green = 0;
                red = 0;

                byteOffset = offsetY * sourceData.Stride + offsetX * 4;

                // Convolve each colour channel with the kernel.
                for (int filterY = -filterOffset; filterY <= filterOffset; filterY++)
                {
                    for (int filterX = -filterOffset; filterX <= filterOffset; filterX++)
                    {
                        calcOffset = byteOffset + (filterX * 4) + (filterY * sourceData.Stride);

                        double weight = filterMatrix[filterY + filterOffset, filterX + filterOffset];

                        blue += (double)(pixelBuffer[calcOffset]) * weight;
                        green += (double)(pixelBuffer[calcOffset + 1]) * weight;
                        red += (double)(pixelBuffer[calcOffset + 2]) * weight;
                    }
                }

                if (mono)
                {
                    // Subtract the sharpened value from the original value
                    // (pixelBuffer; resultBuffer is still empty at this point).
                    // The blue channel alone decides between black and white.
                    blue = pixelBuffer[byteOffset] - factor * blue;
                    blue = (blue > bias ? 255 : 0);
                    green = blue;
                    red = blue;
                }
                else
                {
                    // Subtract the sharpened value from the original value,
                    // add the bias and clamp to the range 0 to 255.
                    blue = pixelBuffer[byteOffset] - factor * blue + bias;
                    green = pixelBuffer[byteOffset + 1] - factor * green + bias;
                    red = pixelBuffer[byteOffset + 2] - factor * red + bias;

                    blue = (blue > 255 ? 255 : (blue < 0 ? 0 : blue));
                    green = (green > 255 ? 255 : (green < 0 ? 0 : green));
                    red = (red > 255 ? 255 : (red < 0 ? 0 : red));
                }

                resultBuffer[byteOffset] = (byte)(blue);
                resultBuffer[byteOffset + 1] = (byte)(green);
                resultBuffer[byteOffset + 2] = (byte)(red);
                resultBuffer[byteOffset + 3] = 255;
            }
        }

        Bitmap resultBitmap = new Bitmap(sourceBitmap.Width, sourceBitmap.Height);

        BitmapData resultData = resultBitmap.LockBits(
            new Rectangle(0, 0, resultBitmap.Width, resultBitmap.Height),
            ImageLockMode.WriteOnly, PixelFormat.Format32bppArgb);

        Marshal.Copy(resultBuffer, 0, resultData.Scan0, resultBuffer.Length);
        resultBitmap.UnlockBits(resultData);

        return resultBitmap;
    }

Sample Images

The sample image used in this article is in the public domain because its copyright has expired. This applies to Australia, the European Union and those countries with a copyright term of life of the author plus 70 years. The original image can be downloaded from Wikipedia.

The Original Image

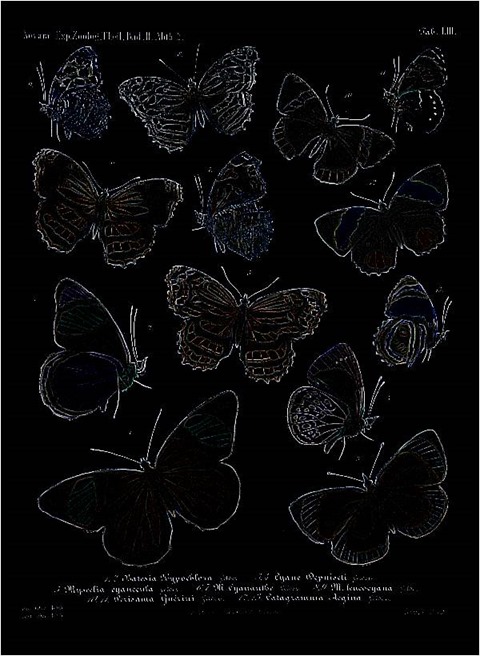

Sharpen5To4, Median 0, Threshold 0

Sharpen5To4, Median 0, Threshold 0, Mono

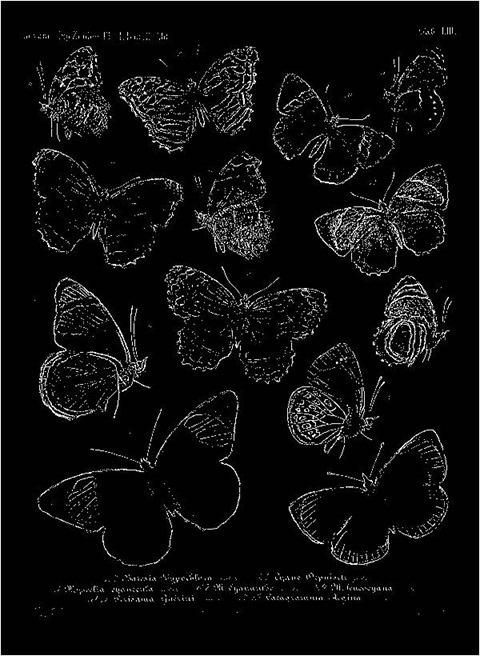

Sharpen7To1, Median 0, Threshold 0

Sharpen7To1, Median 0, Threshold 0, Mono

Sharpen9To1, Median 0, Threshold 0

Sharpen9To1, Median 0, Threshold 0, Mono

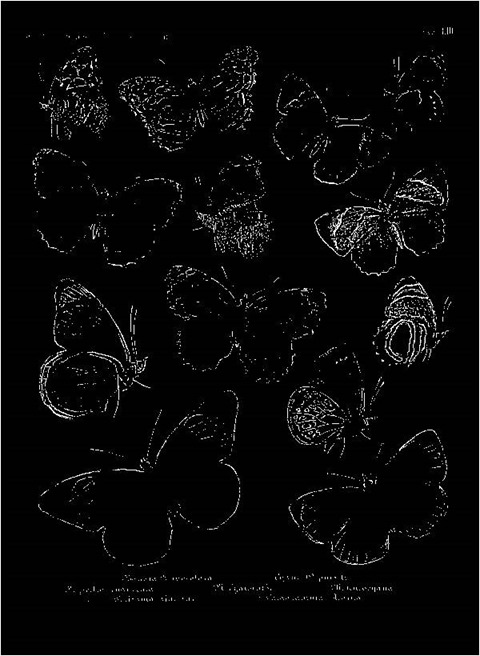

Sharpen10To8, Median 0, Threshold 0

Sharpen10To8, Median 0, Threshold 0, Mono

Sharpen11To8, Median 0, Threshold 0

Sharpen11To8, Median 0, Threshold 0, Grayscale, Mono

Sharpen12To1, Median 0, Threshold 0

Sharpen12To1, Median 0, Threshold 0, Mono

Sharpen24To1, Median 0, Threshold 0

Sharpen24To1, Median 0, Threshold 0, Grayscale, Mono

Sharpen24To1, Median 0, Threshold 0, Mono

Sharpen24To1, Median 0, Threshold 21, Grayscale, Mono

Sharpen48To1, Median 0, Threshold 0

Sharpen48To1, Median 0, Threshold 0, Grayscale, Mono

Sharpen48To1, Median 0, Threshold 0, Mono

Sharpen48To1, Median 0, Threshold 226, Mono

Related Articles and Feedback

Feedback and questions are always encouraged. If you know of an alternative implementation or have ideas on a more efficient implementation please share in the comments section.

I’ve published a number of articles related to imaging and images, listed below:

- C# How to: Image filtering by directly manipulating Pixel ARGB values

- C# How to: Image filtering implemented using a ColorMatrix

- C# How to: Blending Bitmap images using colour filters

- C# How to: Bitmap Colour Substitution implementing thresholds

- C# How to: Generating Icons from Images

- C# How to: Swapping Bitmap ARGB Colour Channels

- C# How to: Bitmap Pixel manipulation using LINQ Queries

- C# How to: Linq to Bitmaps – Partial Colour Inversion

- C# How to: Bitmap Colour Balance

- C# How to: Bi-tonal Bitmaps

- C# How to: Bitmap Colour Tint

- C# How to: Bitmap Colour Shading

- C# How to: Image Solarise

- C# How to: Image Contrast

- C# How to: Bitwise Bitmap Blending

- C# How to: Image Arithmetic

- C# How to: Image Convolution

- C# How to: Image Edge Detection

- C# How to: Difference Of Gaussians

- C# How to: Image Median Filter

- C# How to: Image Unsharp Mask

- C# How to: Image Colour Average

- C# How to: Image Erosion and Dilation

- C# How to: Morphological Edge Detection

- C# How to: Boolean Edge Detection

- C# How to: Gradient Based Edge Detection
